Amy Sprague

March 10, 2026

With support from the National Science Foundation, A&A graduate student Mark Dunn’s simulations capture particle-scale effects that previous plasma models missed, with an eye toward better fusion reactor design.

Conceptual representation of plasma, with points representing charged particles and the mathematical framework used to model their interactions.

When Mark Dunn's plasma simulations finally ran after months of mathematical preparation, they revealed a discrepancy with previous models: the self-heating process essential for supercharging fusion reactions is far more nuanced than traditional models suggest.

Mark Dunn

"While our initial results suggest that alpha heating may not follow the simple paths we hypothesized, the high-fidelity data reveals something much more nuanced," says Dunn, a graduate researcher in A&A’s Computational Plasma Dynamics Lab. "We’ve identified a 'transitory period' where the energy from fusion doesn't just spread out evenly. Instead, it accelerates a small number of fuel particles to extreme velocities, giving them a much higher probability of triggering further reactions, an enhancement that simplified models completely miss."

Named for the alpha particles produced by fusion reactions, alpha heating is "the process by which a fusion plasma heats itself and maintains a self-sustaining burn," Dunn explains. Like the sun, a successful fusion reactor needs this self-heating to maintain the extreme temperatures required for continuous fusion. Alpha heating is what will carry fusion past breakeven, the so-far elusive point at which a reaction releases more energy than is put in, so modeling these reactions correctly is vital.

"These energetic particles can either fuse, or they can transfer their energy to the surrounding medium," Dunn explains. "And by transferring their energy to the surrounding medium, they're not so energetic anymore." Previous models didn't account for this competing dynamic. Improving these models matters because fusion energy represents one of our best hopes for abundant, clean power with no carbon emissions.

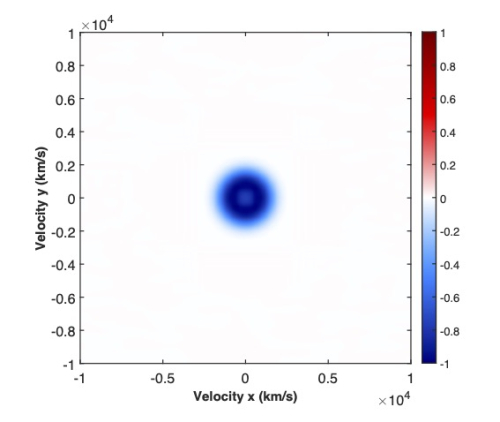

These high-fidelity maps illustrate the "give and take" of a nuclear fusion reaction. The Fuel Depletion image (left) shows a dark blue "sink" where fuel particles are consumed, while the Product Production image (right) reveals a bright red ring of newborn, high-velocity fusion products. This shift—consuming "slower" fuel and replacing it with a faster-moving energetic shell of particles—is the primary mechanism that injects heat into the plasma, a process known as alpha heating.

Just-right modeling

A fusion plasma is so densely packed that it contains roughly a billion particles in a single cubic nanometer. Across a typical experiment, this adds up to trillions of trillions of charged particles. To perfectly predict its behavior would require tracking every single particle's position and velocity, a task Dunn describes as "completely unfeasible" even for the world's most powerful supercomputers.

So researchers have relied on simplifications. Traditional "fluid models" describe plasmas through familiar quantities like density, temperature, and velocity. This approach treats the whole plasma as one fluid and works well enough when the plasma is in equilibrium. But these models struggle with the dynamic, particle-level effects that dominate high-performance fusion experiments, when particle behavior is most kinetic.

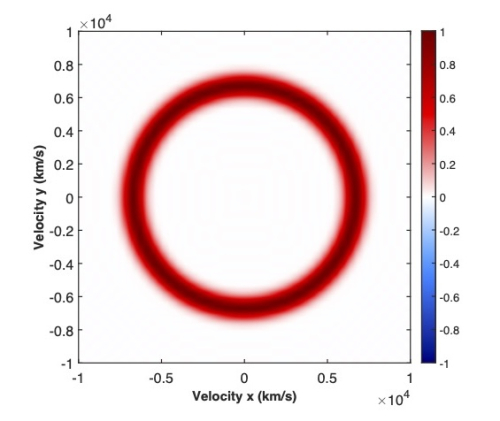

In this 3D map, the vertical axis represents the probability of finding a particle at a given velocity. The highest point of the hill sits at the center (0,0), confirming that the vast majority of particles move at relatively low, "average" speeds. However, most fusion reactions happen at the very edges of the slopes—the "high-energy tails." While the probability of finding particles here is very low, these rare, high-speed particles are the only ones moving fast enough to overcome electrical repulsion and slam together to fuse. Mark Dunn’s high-fidelity model tracks how these specific "tail" particles are used up, providing insights into the dynamics of nuclear fusion that traditional "average-speed" models simply cannot see.
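To give a rough sense of how sparse those high-energy tails are, the sketch below computes the fraction of particles faster than a given multiple of the thermal speed. It assumes an equilibrium (Maxwellian) distribution in three velocity dimensions, which is only a baseline and not the non-equilibrium distributions Dunn's model follows. The function name `maxwellian_tail_fraction` and the thermal-speed convention `v_th = sqrt(2kT/m)` are choices made for this illustration.

```python
import math


def maxwellian_tail_fraction(x: float) -> float:
    """Fraction of particles in a 3D Maxwellian with speed above x * v_th.

    Assumes v_th = sqrt(2 k T / m). The closed form follows from integrating
    the Maxwell speed distribution from x * v_th to infinity.
    """
    return math.erfc(x) + 2.0 / math.sqrt(math.pi) * x * math.exp(-x * x)


# The fraction falls off very quickly as the speed threshold rises.
for multiple in (1.0, 2.0, 3.0, 4.0):
    fraction = maxwellian_tail_fraction(multiple)
    print(f"speed > {multiple:.0f} v_th: fraction = {fraction:.2e}")
```

Under these assumptions, only a small share of particles sits a few thermal speeds out, which is why a model that tracks only averages says little about the particles that actually fuse.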

“You cannot really see competing effects at the particle scale with a fluid model, where you only track density and temperature,” Dunn explains. “For example, you have no clear picture of how fusion rates change when particles behave in complex, non-uniform ways.”

Dunn's research takes a different path: a probabilistic representation. Instead of tracking the trillions of individual particles, he uses a kinetic description built on a distribution function that captures the statistical behavior of all particles at once.

"It still contains some information about all particles, but in a single object," Dunn says. He employs a "collision operator," a mathematical tool that tracks how particles bump into each other and exchange energy or produce fusion reactions. "It gives you the rate of change, or how this distribution function evolves over time, due to particle collisions alone."
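The idea of an operator that returns the rate of change of a distribution function can be sketched with a toy example. The code below does not use the operator from Dunn's research. It swaps in a much simpler textbook relaxation model (the BGK operator), in one velocity dimension and with no fusion reactions, purely to show the pattern: take the current distribution, compute its rate of change from collisions, and step forward in time. The grid, the collision rate `nu`, the time step, and all function names are assumptions for illustration.

```python
import numpy as np

# Velocity grid in arbitrary units (an assumption for this sketch).
v = np.linspace(-12.0, 12.0, 481)
dv = v[1] - v[0]


def moments(f):
    """Density, mean velocity, and temperature of a distribution on the grid."""
    n = f.sum() * dv
    u = (f * v).sum() * dv / n
    T = (f * (v - u) ** 2).sum() * dv / n
    return n, u, T


def maxwellian(n, u, T):
    """Equilibrium distribution with the given density, mean velocity, temperature."""
    return n / np.sqrt(2.0 * np.pi * T) * np.exp(-((v - u) ** 2) / (2.0 * T))


def bgk_collision_operator(f, nu=1.0):
    """Toy collision operator: rate of change of f due to collisions alone.

    It pushes f toward the Maxwellian that shares its density, mean velocity,
    and temperature, at collision rate nu.
    """
    return nu * (maxwellian(*moments(f)) - f)


# Start far from equilibrium: two counter-streaming beams.
f0 = 0.5 * (maxwellian(1.0, -2.0, 0.5) + maxwellian(1.0, 2.0, 0.5))
f = f0.copy()

dt = 0.01
for _ in range(1000):
    f = f + dt * bgk_collision_operator(f)  # forward Euler time step

n0, u0, T0 = moments(f0)
n1, u1, T1 = moments(f)
distance_before = np.abs(f0 - maxwellian(n0, u0, T0)).sum() * dv
distance_after = np.abs(f - maxwellian(n1, u1, T1)).sum() * dv
print(f"density: {n0:.6f} -> {n1:.6f}")
print(f"distance from equilibrium: {distance_before:.3e} -> {distance_after:.3e}")
```

In this toy model the collisions reshape the distribution toward equilibrium while leaving the total density and temperature essentially unchanged, which is the kind of bookkeeping a collision operator is responsible for.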

To evaluate the collision operator, Dunn uses a fast spectral method—a mathematical “engine” that translates particle dynamics and interactions into the language of waves. By treating the plasma as a series of frequencies rather than a collection of dots, the method can identify hidden structures and avoid redundant calculations.


Instead of computing every particle interaction from scratch, the spectral method finds repeating patterns and uses them to evaluate many interactions at once, dramatically reducing the amount of computation required. This turns a calculation that would normally be impossible for even a supercomputer into a task manageable for a modern graphics card, providing a high-fidelity look at fusion in record time.
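A generic illustration of why moving to frequencies saves work: many pairwise-interaction sums have the structure of a convolution, and a convolution can be evaluated either term by term or through the fast Fourier transform (FFT). The sketch below is not Dunn's algorithm. It only compares the two routes on random data, with hypothetical function names, to show that they agree while the FFT route avoids the nested loop.

```python
import numpy as np


def circular_convolution_direct(a, b):
    """Evaluate every pairwise term explicitly: cost grows like N squared."""
    n = len(a)
    out = np.zeros(n)
    for i in range(n):
        for j in range(n):
            out[i] += a[j] * b[(i - j) % n]
    return out


def circular_convolution_fft(a, b):
    """Same result via the FFT: cost grows like N log N."""
    return np.fft.ifft(np.fft.fft(a) * np.fft.fft(b)).real


rng = np.random.default_rng(0)
a = rng.standard_normal(256)
b = rng.standard_normal(256)

direct = circular_convolution_direct(a, b)
fast = circular_convolution_fft(a, b)
max_difference = np.max(np.abs(direct - fast))
print(f"largest difference between the two methods: {max_difference:.2e}")
```

For 256 points the direct route evaluates 65,536 products, while the FFT route needs a few thousand operations, and the gap widens as the grid grows.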

A related technique called low-rank decomposition compresses the data further, similar to how a JPEG stores a photo using fewer parameters without losing essential detail. "Low-rank decomposition reduces the memory required to store the data," Dunn says, "but also by compressing the data, you make the operations on that data cheaper. So it's not just memory but also computational cost that decreases."
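The memory and cost savings Dunn describes can be sketched with a truncated singular value decomposition (SVD), one common way to build a low-rank approximation. Whether his code uses an SVD or another factorization is not stated in the article, so treat this as an assumption. The example distribution (a broad bulk plus a small fast beam on a 200 by 200 velocity grid) and all variable names are also made up for illustration.

```python
import numpy as np

# A made-up 2D velocity distribution: a bulk population plus a small beam.
v = np.linspace(-6.0, 6.0, 200)
vx, vy = np.meshgrid(v, v, indexing="ij")
f = np.exp(-(vx**2 + vy**2) / 2.0) + 0.05 * np.exp(
    -((vx - 3.0) ** 2 + (vy - 1.0) ** 2) / 0.5
)

# Factor the grid data and keep only the singular values that matter.
U, s, Vt = np.linalg.svd(f, full_matrices=False)
rank = int(np.sum(s > 1e-10 * s[0]))
U_r, s_r, Vt_r = U[:, :rank], s[:rank], Vt[:rank]

# Memory: the full grid versus the stored factors.
full_storage = f.size
compressed_storage = rank * (U_r.shape[0] + Vt_r.shape[1] + 1)

# Accuracy of the compressed representation.
f_lowrank = (U_r * s_r) @ Vt_r
relative_error = np.linalg.norm(f - f_lowrank) / np.linalg.norm(f)

# Operations get cheaper too: apply the factors one after another
# instead of multiplying by the full grid.
x = np.ones(v.size)
y_full = f @ x
y_lowrank = U_r @ (s_r * (Vt_r @ x))

print(f"rank kept: {rank}")
print(f"stored numbers: {full_storage} -> {compressed_storage}")
print(f"relative error: {relative_error:.2e}")
```

Because this example is a sum of two separable pieces, a handful of factors reproduce it to near machine precision. Real distributions are not this tidy, so in practice the rank kept is a tradeoff between accuracy and cost.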


Improving reactor design

The findings are relevant to virtually every fusion technology in development, from tokamaks and spheromaks to Z-pinches and inertial confinement fusion devices. "This is relevant to really any thermonuclear fusion device," Dunn says, "where a plasma is heated to very high temperatures to facilitate nuclear fusion reactions."

Researchers use computational models to inform the design of experimental devices, and studies have shown these simulations can have a significant impact on energy output. The problem with current state-of-the-art models is that they don't capture the particle-scale, non-equilibrium effects Dunn is investigating – situations where particles behave in complex ways rather than settling into a simple average state.

"People have shown that it's not a small effect," Dunn notes, "so it can have an impact on the order of 10% on the predictive capabilities of the models."

"We're well along the path to net energy gain," says Professor Uri Shumlak, Dunn's faculty adviser. "Increasing our modeling fidelity improves our ability to understand experimental observations and our predictive accuracy, which could mean the difference between a reactor design that works and one that doesn't."

Of his work, Dunn says, "I have learned to enjoy the process even when the research gets really abstract. I enjoy the math and physics for what they are. Seeing a simulation deliver results, either expected or unexpected, is really satisfying."